Getting Started with Python

Provides quick access, best practices, and useful resources for Python programming.

Quick Access

- Python is a programming language.

- Python Documentation

- Python Tutorials on W3Schools and Tutorialspoint

- pip is the package installer for Python.

- virtualenv is a tool to create isolated Python virtual environments.

- conda creates virtual environments that can also hold Software Development Kits (SDKs). It can replace pip and virtualenv, but you can still use pip when conda does not provide a certain package. You can install it through either Miniconda or Anaconda.

- Python gitignore is the list of files that should be ignored by git when using Python.

- WTF Python collects surprising and confusing Python snippets.

- pdb is the Python debugger.

Python 2.x is no longer supported, so we now use Python 3.x instead. On older systems, the python command may still refer to Python 2.x, so you’ll need to use python3 instead. To check which version the python command refers to, type `python -V`.
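The same check can be done from inside a script. The following is a minimal sketch; the helper names `python_version_summary` and `is_python3` are made up for illustration, and the code deliberately avoids f-strings so that it also runs when started by an old Python 2.x interpreter.

```python
import sys


def python_version_summary(info=None):
    """Return 'major.minor.micro' for the given (or the running) interpreter."""
    info = sys.version_info if info is None else info
    return "%d.%d.%d" % (info[0], info[1], info[2])


def is_python3(info=None):
    """True if the given (or the running) interpreter is Python 3 or newer."""
    info = sys.version_info if info is None else info
    return info[0] >= 3


if __name__ == "__main__":
    print("Running Python " + python_version_summary())
    if not is_python3():
        print("This is Python 2.x; try the python3 command instead.")
```

Saving this as a file and running it with both python and python3 shows which interpreter each command points to.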

Python 3.9 is very new at the time of writing, and may not be compatible with some packages, so Python 3.6-3.8 is recommended.

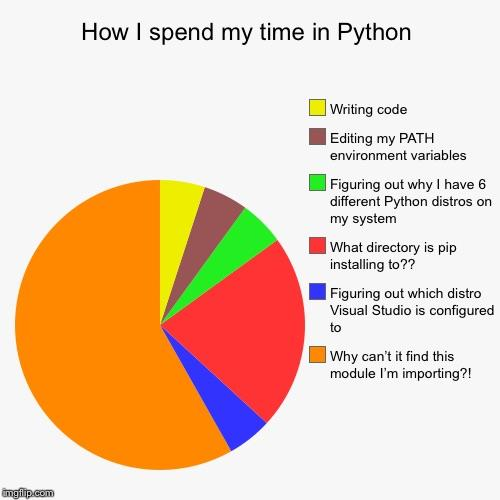

As a programming language, Python stands out for its readability, and it has a large community that maintains useful libraries. However, its environment is notoriously difficult to set up.

Directly installing packages contaminates the Python environment on your computer. Always create a virtual environment (either by virtualenv, conda, or Docker) for each of your projects.

Installation

Windows

Some other tutorials may suggest installing Python with the official installer. However, that approach installs Python globally, so you may accidentally install packages globally from the Command Prompt and contaminate your computer’s environment.

I suggest installing Anaconda instead and letting Anaconda manage the Python versions for you. You can follow the exact installation steps here.

After installing, you should work in the Anaconda Prompt for all future commands. Typing python in the regular Command Prompt will not work. Alternatively, if you prefer a GUI, you can use Anaconda Navigator.

Launch Anaconda Prompt and follow the next section.

MacOS

You can install Anaconda here. After installation, you can open Terminal and follow the next section.

Linux

Anaconda: (You may want to change the filename to the newest version)

- For 64-bit (x86_64 / x64 / amd64 / intel64)

wget https://repo.anaconda.com/archive/Anaconda3-2022.10-Linux-x86_64.sh

bash Anaconda3-2022.10-Linux-x86_64.sh

- For 32-bit (x86 / i386 / i686)

wget https://repo.anaconda.com/archive/Anaconda3-2022.10-Linux-x86.sh

bash Anaconda3-2022.10-Linux-x86.sh

- For ARM (Untested)

wget https://repo.anaconda.com/archive/Anaconda3-2022.10-Linux-aarch64.sh

bash Anaconda3-2022.10-Linux-aarch64.sh

Virtualenv:

sudo apt install -y virtualenv

After installation, open Terminal and follow the next section.

Virtual Environment

For beginners, I recommend Anaconda instead of virtualenv since it’s pretty stable on all Operating Systems. Furthermore, Anaconda can install SDKs in the virtual environment, while virtualenv cannot.

However, Anaconda’s environment is stored globally, which is sometimes not preferable for small projects. Therefore, I often use virtualenv for small projects.

Create Virtual Environment

Anaconda:

# Create a python3.8 virtual environment named 'testenv'

conda create -n testenv python=3.8 -y

conda activate testenv

# Make sure your prompt now starts with '(testenv)', not with '(base)' or nothing.

# Install packages and run code

# conda install ...

conda deactivate

Virtualenv:

# Assume python3.8

virtualenv -p /usr/bin/python3.8 venv

source venv/bin/activate

# Install packages and run code here

# pip install ...

deactivate
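To confirm from inside Python that an environment is actually active, something like the sketch below may help. The function names are hypothetical. It relies on `sys.prefix` differing from `sys.base_prefix` inside a virtualenv (older virtualenv releases set `sys.real_prefix` instead) and on the `CONDA_DEFAULT_ENV` variable that conda sets on activation.

```python
import os
import sys


def in_virtualenv():
    """True if the interpreter runs inside a virtualenv/venv environment."""
    # Old virtualenv releases set sys.real_prefix; newer ones make
    # sys.prefix differ from sys.base_prefix.
    if hasattr(sys, "real_prefix"):
        return True
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


def active_conda_env(environ=None):
    """Return the active conda environment name, or None if there is none."""
    environ = os.environ if environ is None else environ
    return environ.get("CONDA_DEFAULT_ENV") or None


if __name__ == "__main__":
    print("virtualenv active:", in_virtualenv())
    print("conda environment:", active_conda_env())
```

If both checks come back negative, packages you install will most likely land in the global environment.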

Export/Import Environment

Anaconda:

# Export (while in virtual environment)

conda env export > environment.yml

# Import (outside virtual environment)

conda env create -f environment.yml

Virtualenv:

# Export (while in virtual environment)

pip freeze > requirements.txt

# Import (while in virtual environment)

pip install -r requirements.txt

The auto-generated environment exports are not human-friendly since they list an entry for every single installed package. If you wish to read and modify an environment export, you may want to write the environment config from scratch instead.
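One way to get a shorter, readable file without starting completely from scratch is to keep only the packages you depend on directly. The helper below is a hypothetical sketch, not a pip feature: it handles only the plain `name==version` lines of pip freeze output, it does not normalize dashes versus underscores in names, and the versions in the sample text are placeholders.

```python
def minimal_requirements(freeze_text, wanted):
    """Keep only the pinned lines of pip-freeze output for the named packages."""
    wanted_lower = {name.lower() for name in wanted}
    kept = []
    for line in freeze_text.splitlines():
        line = line.strip()
        # Only the common 'name==version' form is handled; skip the rest.
        if line.startswith("#") or "==" not in line:
            continue
        name = line.split("==", 1)[0].strip()
        if name.lower() in wanted_lower:
            kept.append(line)
    return kept


if __name__ == "__main__":
    # Placeholder freeze output; real output is usually much longer.
    sample = "numpy==1.19.2\nsix==1.15.0\npandas==1.1.3\npytz==2020.1\n"
    print("\n".join(minimal_requirements(sample, ["numpy", "pandas"])))
```

The remaining dependencies are then resolved by pip when the shortened file is installed.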

Remove Environment

Anaconda:

# Assume the virtual environment's name is 'testenv'

conda env remove -n testenv

Virtualenv:

# Assume virtual environment is located at './venv'

rm -rf venv

Clean Caches

If your computer runs out of space when using Anaconda, you may want to clean all caches and unused packages:

# Remove index cache, lock files, unused cache packages, and tarballs.

conda clean -a -y

If you use pip, you can remove all items from the cache:

pip cache purge

Without Virtual Environment

If you didn’t use virtual environments, the installed packages are stored in ~/.local by default. If you encounter any unsolvable issues, you may consider backing up the directory and starting Python from scratch:

mv ~/.local ~/.local.bak

GPU Acceleration

This section assumes you use an NVIDIA GPU. For non-NVIDIA GPUs, it may be possible to install OpenCL and make it work with TensorFlow/PyTorch, but I haven’t tried it myself.

You will need to install a graphics card driver, CUDA, cuDNN, and TensorFlow and/or PyTorch for GPU acceleration. The version numbers of these components are all coupled together. A version mismatch can make your code fall back to the CPU.

Install Driver and Check Driver Version

- Windows: Check the version at NVIDIA Control Panel > Help > System Information > Display > Driver version. Otherwise, install the NVIDIA GPU driver.

- MacOS: Mac does not use NVIDIA GPUs.

- Linux (taking Ubuntu as an example): Check the version by:

ubuntu-drivers devices

You may install the drivers by:

# install recommended

sudo ubuntu-drivers autoinstall

# or install the preferred and supported version for your card

sudo apt install -y nvidia-driver-470

Check Supported CUDA Versions

Check if your GPU driver is compatible with the CUDA version you want to use. (Scroll down to Table 2 and Table 3)

In some cases, you may want to run a specific version of TensorFlow / PyTorch. You can find the corresponding CUDA/cuDNN requirements for a specific TensorFlow version or a specific PyTorch version (including the torchvision and torchaudio versions).

For information regarding compute capability, please refer to this page on Wikipedia.
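When comparing your driver against the minimum version listed in the compatibility tables, compare the numbers piece by piece rather than as plain strings (as strings, "9.0" sorts after "10.1"). Below is a small sketch with hypothetical helper names. The version numbers in the usage part are placeholders only; look up the real minimum for your CUDA version in the tables.

```python
def parse_version(text):
    """Turn a dotted version such as '450.51.06' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_at_least(installed, required):
    """True if the installed driver version is >= the required minimum."""
    return parse_version(installed) >= parse_version(required)


if __name__ == "__main__":
    # Placeholder numbers; take the real minimum from NVIDIA's tables.
    installed_driver = "470.57.02"
    minimum_for_cuda = "450.51.06"
    print("driver new enough:", driver_at_least(installed_driver, minimum_for_cuda))
```

The installed driver version is the one reported by the commands in the previous section.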

Installing CUDA and cuDNN by Anaconda

If you are using Anaconda and your GPU driver supports CUDA 10.1, run the following commands:

- Linux:

conda install -y cudatoolkit=10.1

conda install -y cudnn=7.6.5

conda install -y pytorch==1.7.1 -c pytorch

conda install -y tensorflow-gpu==2.2.0 -c anaconda

conda install -y gym -c conda-forge

- Windows:

conda install -y cudatoolkit=10.1

conda install -y cudnn=7.6.5

conda install -y pytorch==1.7.1 -c pytorch

conda install -y tensorflow-gpu==2.3.0 -c anaconda

conda install -y gym -c conda-forge

If your GPU driver does not support CUDA 10.1, change the commands above to the latest CUDA version it supports, and change the cuDNN, PyTorch, and TensorFlow versions to match, following their documentation.

Installing CUDA and cuDNN Manually

In Anaconda, the newest version of CUDA may not be available. In such cases, you will want to install CUDA manually and install the corresponding cuDNN.

The installation script should look like the following (taking CUDA 11.0 and cuDNN 8 as an example):

mkdir -p tmp && cd tmp

wget -q https://developer.download.nvidia.com/compute/cuda/11.0.3/local_installers/cuda_11.0.3_450.51.06_linux.run

sudo sh cuda_11.0.3_450.51.06_linux.run --silent --toolkit --override

wget -q https://developer.download.nvidia.com/compute/redist/cudnn/v8.2.1/cudnn-11.3-linux-x64-v8.2.1.32.tgz

tar -xzvf cudnn-11.3-linux-x64-v8.2.1.32.tgz

sudo cp cuda/include/cudnn*.h /usr/local/cuda/include

sudo cp -P cuda/lib64/libcudnn* /usr/local/cuda/lib64

sudo chmod a+r /usr/local/cuda/include/cudnn*.h /usr/local/cuda/lib64/libcudnn*

cd .. && rm -rf tmp

After the installation, you will see a message like the following (the version in the paths depends on the CUDA version you installed):

Please make sure that

- PATH includes /usr/local/cuda-11.3/bin

- LD_LIBRARY_PATH includes /usr/local/cuda-11.3/lib64, or, add /usr/local/cuda-11.3/lib64 to /etc/ld.so.conf and run ldconfig as root

You can add them by appending the following to your ~/.bashrc, adjusting the version number to match your installation:

export PATH=/usr/local/cuda-11.3/bin:$PATH

export LD_LIBRARY_PATH=/usr/local/cuda-11.3/lib64:$LD_LIBRARY_PATH
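After opening a new terminal, you may want to verify that both variables really contain the CUDA directories. This is a minimal sketch; `path_var_includes` is a made-up helper, and the cuda-11.3 paths are simply the ones from the message above, so adjust them to your installation.

```python
import os


def path_var_includes(var_name, directory, environ=None):
    """True if `directory` is one of the entries of the path-like variable."""
    environ = os.environ if environ is None else environ
    entries = environ.get(var_name, "").split(os.pathsep)
    target = os.path.normpath(directory)
    return any(os.path.normpath(entry) == target for entry in entries if entry)


if __name__ == "__main__":
    # Paths copied from the installer message; change the version if needed.
    print("PATH ok:", path_var_includes("PATH", "/usr/local/cuda-11.3/bin"))
    print(
        "LD_LIBRARY_PATH ok:",
        path_var_includes("LD_LIBRARY_PATH", "/usr/local/cuda-11.3/lib64"),
    )
```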

Alternatively, you can follow the guide here: Tensorflow Install GPU support. There’s also an official guide that teaches you to do everything manually: CUDA Installation Guide, cuDNN Installation Guide.

Let CUDA Install the Driver For You

This step is only necessary if you encountered issues that are related to the apt driver.

If you have installed nvidia drivers through apt, you might want to uninstall them before installing CUDA:

sudo apt-get remove --purge nvidia-*

sudo apt autoremove

sudo apt autoclean

sudo reboot

If you are using a desktop environment, you may want to use Ctrl + Alt + F3 to enter TTY mode, and disable the desktop as follows, so that the CUDA installer can install the driver for you:

sudo service gdm stop

# and enter TTY mode and run the CUDA installer

sudo reboot

See this post and this post for more information.

Access GPU from PyTorch and TensorFlow

You can test your installation by the following scripts:

git clone https://github.com/abstractionrevealed/python-getting-started.git

cd python-getting-started

# Test GPU with PyTorch

python test_pytorch_gpu.py

# Test GPU with TensorFlow 2

python test_tensorflow_gpu.py

If any error occurs, it may be a version mismatch between the libraries. Try installing again, starting by uninstalling everything. The following scripts demonstrate some simple tasks in ML:

# Train a Simple CNN Classifier on MNIST

python mnist_convnet.py

# Train REINFORCE on Cartpole

python reinforce.py
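If you prefer not to clone the repository, a quick GPU check can be sketched as below. This is not the content of the scripts above, only an assumed minimal equivalent. `gpu_report` is a hypothetical helper that reports on whichever of the two frameworks is installed and records an error for the other.

```python
def gpu_report():
    """Collect GPU visibility info from whichever frameworks are installed."""
    report = {}
    try:
        import torch

        report["torch"] = {
            "version": torch.__version__,
            "cuda_available": torch.cuda.is_available(),
            "cuda_version": torch.version.cuda,  # None for CPU-only builds
        }
    except Exception as exc:  # not installed, or installed but broken
        report["torch"] = {"error": repr(exc)}
    try:
        import tensorflow as tf

        gpus = tf.config.list_physical_devices("GPU")
        report["tensorflow"] = {"version": tf.__version__, "gpu_count": len(gpus)}
    except Exception as exc:  # not installed, or installed but broken
        report["tensorflow"] = {"error": repr(exc)}
    return report


if __name__ == "__main__":
    for name, info in gpu_report().items():
        print(name, info)
```

A `cuda_available` of False or a `gpu_count` of 0 usually points back to the version coupling described earlier.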

Use CPU (If you don’t want to use GPU)

If you are using Anaconda, run the following commands (without setting up cudatoolkit):

conda install -y pytorch -c pytorch

conda install -y tensorflow -c anaconda

# gym is for Reinforcement Learning

conda install -y gym -c conda-forge

Diagnostics

After setting up everything correctly, some issues may still occur.

- UserWarning: CUDA initialization: CUDA unknown error

Test with Python:

import torch

print(torch.cuda.is_available())

Error message:

UserWarning: CUDA initialization: CUDA unknown error - this may be due to an incorrectly set up environment, e.g. changing env variable CUDA_VISIBLE_DEVICES after program start. Setting the available devices to be zero.

Solution: (reference)

sudo rmmod nvidia_uvm

sudo modprobe nvidia_uvm

- NO_PUBKEY A4B469963BF863CC

Test with apt:

sudo apt update

Error message:

Err:1 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu1804/x86_64 InRelease The following signatures couldn't be verified because the public key is not available: NO_PUBKEY A4B469963BF863CC

Solution: (reference)

sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu1804/x86_64/3bf863cc.pub

sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu1804/x86_64/7fa2af80.pub

Run Code

The native way

In your virtual environment, type:

# Assume you want to run test.py

python test.py

I recommend using VSCode as your code editor and installing Pylance for a better coding experience. For vim lovers, you might want to install VSCode Vim.

If you are using Jupyter notebooks in VSCode and want to kill all Python kernels that didn’t exit successfully:

killall python

If you don’t use an IDE, but want to know all properties and methods of an object obj:

dir(obj)
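The output of dir() also contains many double-underscore names. A small sketch for filtering them out (the helper name `public_names` is made up for illustration):

```python
def public_names(obj):
    """List attribute names of obj that do not start with an underscore."""
    return [name for name in dir(obj) if not name.startswith("_")]


if __name__ == "__main__":
    # For a list this prints its methods, such as append and sort.
    print(public_names([]))
```

Calling help(obj) in the interpreter prints the documentation for the same object.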

Jupyter Notebook / Jupyter Lab (.ipynb)

The .ipynb file can be opened in Jupyter Notebook / Jupyter Lab or VSCode. Make sure to launch the Jupyter Lab instance inside your virtual environment.

Jupyter Lab is an enhanced version of Jupyter Notebook. For configuration, see the doc for more info.

Python Interactive Window in VSCode

Allows you to edit a .py file as if you were editing a Jupyter Notebook. You can learn to use it here. You can select the preferred virtual environment in the status bar.

I like this method a lot since it maintains the flexibility and REPL (Read–eval–print loop) of Jupyter Notebooks, while retaining the power of VSCode autocompletion, type/parameter hints, and more.

Custom Server

You can ssh into your server. To keep the system from killing your program when your ssh session closes, run it inside tmux so that your model continues training even if the session drops due to network instability. For Jupyter Notebook / Jupyter Lab, launch the Jupyter session inside tmux and access the Jupyter server through port forwarding or SSH tunneling. For VSCode fans, you may want to use VSCode Remote Development.

Google Colab

- Follow the Quick Start Guide. The UI is similar to Jupyter Notebook.

- Make sure to enable hardware acceleration when needed.

- Get familiar with the I/O APIs. Your Google Drive acts as the hard drive on the device.

- Be aware of the resource limits and remember to save checkpoints.

- Keep in mind that Google Colab notebooks may use Colab-specific APIs that aren’t available in normal Jupyter Notebooks, which may be a hassle when running the code locally.

Other Online Services

Debugging

You can use an IDE and utilize its debugger feature. Alternatively, you can launch pdb by the following command:

python3 -m pdb <SCRIPT_NAME>.py <OPTIONS>

The breakpoints can be written directly in code by:

import pdb; pdb.set_trace()
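A breakpoint can also be made conditional so that it only triggers when you ask for it. The function below is a made-up example for illustration. Since Python 3.7, the built-in breakpoint() can be used in place of the import pdb; pdb.set_trace() line.

```python
import pdb


def average(values, debug=False):
    """Return the mean of values; pause in pdb first when debug is True."""
    total = sum(values)
    if debug:
        # Execution stops here. Try `p total`, `n` (next), or `c` (continue).
        pdb.set_trace()
    return total / len(values)


if __name__ == "__main__":
    print(average([1, 2, 3, 4]))
```

Calling average([1, 2, 3, 4], debug=True) drops into the pdb prompt right before the division.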

Packaging and Maintenance

- unittest is the default testing tool for Python.

- pytest is a widely adopted alternative to unittest.

- flake8 can lint your code to maintain coding-style consistency.

- pylint can also lint your code.

- autopep8 can fix simple linting issues for you.

- mypy is used for static type checking.

- coverage shows the testing coverage for your project.

- tox and nox are the two most widely used automated testing tools for developing Python packages. They support testing the package on multiple Python versions and environments with a single command.

- Sphinx is the package used to generate the Python documentation.

- matplotlib.sphinxext.plot_directive is for plotting figures with code.

- sphinx.ext.viewcode is for viewing source code.

- sphinx.ext.autodoc is for automatically generating docs for modules.

- sphinx.ext.doctest is for testing inline code.

- sphinx.ext.intersphinx is for referencing external libraries.

- sphinx_tabs.tabs is for using tab pages.

- nbsphinx is for rendering IPython notebooks (.ipynb) as a document web page.

- Read the Docs can help host your documentation site.

- Python Packaging User Guide provides instructions on uploading a package to PyPI.

I usually use pytest for unit testing, mypy for static type checking, coverage to show test coverage, flake8 and pylint for linting code, tox to test with multiple Python versions, and sphinx (with plugins) to automatically generate documentation.
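To give a feel for how pytest and mypy fit together, here is a tiny hypothetical module. The functions carry type hints that mypy can check, and the functions whose names start with test_ use plain assert statements, which is the style pytest collects and runs. In a real project the tests would normally live in a separate test file.

```python
from typing import List


def clamp(value: float, low: float, high: float) -> float:
    """Limit value to the closed interval [low, high]."""
    if low > high:
        raise ValueError("low must not exceed high")
    return max(low, min(value, high))


def clamp_all(values: List[float], low: float, high: float) -> List[float]:
    """Apply clamp to every element of values."""
    return [clamp(value, low, high) for value in values]


def test_clamp() -> None:
    assert clamp(5, 0, 10) == 5
    assert clamp(-1, 0, 10) == 0
    assert clamp(11, 0, 10) == 10


def test_clamp_all() -> None:
    assert clamp_all([-1, 5, 11], 0, 10) == [0, 5, 10]
```

Running pytest on the file executes both tests, and running mypy on it checks the annotations.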

You may want to refer to this package I developed. You can ask me to provide more details on how to perform packaging and maintenance.

Convert Notebook Files to Other Format

jupyter nbconvert --to html notebook.ipynb

jupyter nbconvert --to python notebook.ipynb
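Under the hood, an .ipynb file is a JSON document, so simple extractions can also be scripted by hand. The sketch below is not how nbconvert works internally. It is a hypothetical helper that assumes the version 4 notebook format, where cells sit in a top-level "cells" list.

```python
import json


def code_cells(notebook_json):
    """Return the source text of every code cell in a notebook JSON string."""
    notebook = json.loads(notebook_json)
    sources = []
    for cell in notebook.get("cells", []):
        if cell.get("cell_type") != "code":
            continue
        source = cell.get("source", "")
        # The format allows either one string or a list of line strings.
        if isinstance(source, list):
            source = "".join(source)
        sources.append(source)
    return sources


if __name__ == "__main__":
    with open("notebook.ipynb", encoding="utf-8") as handle:
        print("\n\n".join(code_cells(handle.read())))
```

For anything beyond a quick look, nbconvert remains the more robust choice.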

Docker Containers

You can skip the installation of Anaconda/CUDA/cuDNN and directly install Docker with nvidia-docker, although the GPU driver is still required. Virtual environments are not necessary when Docker is used, since the Docker container itself is an isolated environment.

You should install Docker, NVIDIA Docker, and NVIDIA Container Runtime, modify /etc/docker/daemon.json, and finally restart the docker service and test whether you have installed everything correctly.

The installation command should be something like this:

sudo apt install -y docker.io

sudo usermod -aG docker $USER

# Re-login to update user group information

sudo apt install -y nvidia-docker2

sudo apt install -y nvidia-container-runtime

# Check /etc/docker/daemon.json

sudo pkill -SIGHUP dockerd

sudo systemctl restart docker

docker run --rm --gpus all nvidia/cuda:10.2-base nvidia-smi

You will want to install docker.io instead of docker-ce in most cases. See this post for more details.

In case of Unable to locate package nvidia-docker2, run (source):

sudo apt install -y curl

distribution=$(. /etc/os-release;echo $ID$VERSION_ID) \

&& curl -fsSL https://nvidia.github.io/libnvidia-container/gpgkey | sudo gpg --dearmor -o /usr/share/keyrings/nvidia-container-toolkit-keyring.gpg \

&& curl -s -L https://nvidia.github.io/libnvidia-container/$distribution/libnvidia-container.list | \

sed 's#deb https://#deb [signed-by=/usr/share/keyrings/nvidia-container-toolkit-keyring.gpg] https://#g' | \

sudo tee /etc/apt/sources.list.d/nvidia-container-toolkit.list

sudo apt update

sudo apt install -y nvidia-docker2

sudo apt install -y nvidia-container-runtime

If you cannot run the CUDA docker image, check your /etc/docker/daemon.json. It should look something like this:

{

"runtimes": {

"nvidia": {

"path": "nvidia-container-runtime",

"runtimeArgs": []

}

},

"default-runtime": "nvidia"

}
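If you would rather check the file programmatically, the following sketch parses it and looks for the two keys shown above. The function names are hypothetical, and the script only reads the file; it does not modify the Docker configuration.

```python
import json


def has_nvidia_runtime(config_text):
    """True if the daemon.json text declares a runtime named 'nvidia'."""
    config = json.loads(config_text)
    return "nvidia" in config.get("runtimes", {})


def nvidia_is_default(config_text):
    """True if the daemon.json text sets 'nvidia' as the default runtime."""
    return json.loads(config_text).get("default-runtime") == "nvidia"


if __name__ == "__main__":
    with open("/etc/docker/daemon.json", encoding="utf-8") as handle:
        text = handle.read()
    print("nvidia runtime declared:", has_nvidia_runtime(text))
    print("nvidia is default runtime:", nvidia_is_default(text))
```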

You may be interested in the pre-built NGC containers:

- NGC Catalog

- NGC Container User Guide

- CUDA Application Compatibility

# If installed CUDA

nvidia-smi # inspect GPU driver version

# Or with Ubuntu command

ubuntu-drivers devices # show supported drivers

- Optimized Frameworks Release Notes: See all used libraries in the container. For example, version 20.03 of TensorFlow/PyTorch is the newest image that is backward compatible with CUDA 10. If your graphics card’s driver only supports up to CUDA 10, version 20.04 isn’t compatible with the driver and thus cannot be used.

The tables in those pages are good for finding a suitable image tag. The release notes contain the detailed library / SDK versions of the monthly released images.

Frequently Used Packages

- numpy for matrix calculation.

- scipy for those functions not supported by numpy.

- pandas for storing data as a spreadsheet.

- matplotlib for plotting.

- seaborn for more readable plotting with pandas DataFrame. This package is based on matplotlib; thus most matplotlib commands are compatible.

- tensorflow, pytorch (pip package name is torch), keras (included in tensorflow), mxnet, and jax (installation guide) for deep learning training.

- gym for RL environments.

- etc.
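To see which of these packages are available in the current environment without importing them, a sketch like the following can be used. The helper names are made up. Note that the list holds import names, so PyTorch appears as torch.

```python
import importlib.util

# Import names of some of the packages listed above (pytorch imports as torch).
IMPORT_NAMES = [
    "numpy", "scipy", "pandas", "matplotlib",
    "seaborn", "tensorflow", "torch", "gym",
]


def is_installed(module_name):
    """True if a top-level module with this name can be found."""
    return importlib.util.find_spec(module_name) is not None


def installed_report(names=IMPORT_NAMES):
    """Map each module name to whether it is available in this environment."""
    return {name: is_installed(name) for name in names}


if __name__ == "__main__":
    for name, available in installed_report().items():
        print(name.ljust(12), "installed" if available else "missing")
```

Run it inside the activated virtual environment, since the result depends on which interpreter executes it.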

Epilogue

If you have any questions or have spotted some typos/errors, please leave a comment below.

Finally, congratulations on finishing reading this article. You should feel less pain when configuring Python environments in the future. If you forget something, feel free to use the site search function for quick reference.

Image Source: Reddit
